Research

Sampling-based motion planning

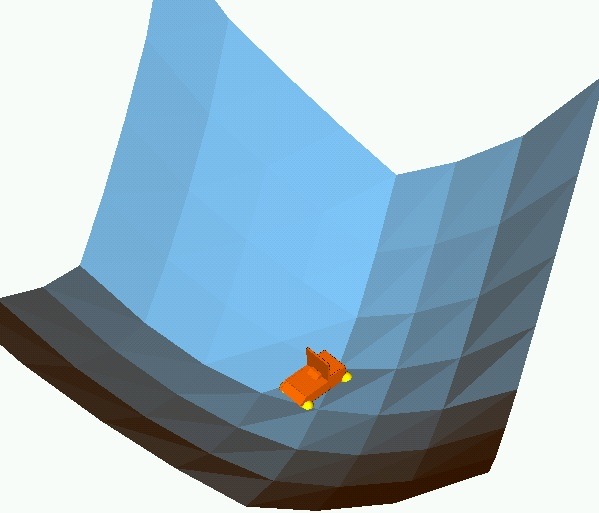

I have worked extensively on robot motion planning, focusing on a particular type of algorithms that rely on sampling random robot configurations. These algorithms have been shown to be effective at efficiently computing paths for a variety of complex systems. With several collaborators, I have developed new algorithms that are tailored for robots with many degrees of freedom and/or complex dynamics. Implementations of these algorithms are typically made available through the Open Motion Planning Library.

Recently, we have shown that many previously proposed algorithms for planning with geometric constraints (such as end-effector constraints) can be generalized by decoupling the motion planning strategy from the constraint adherence methodology. This enables us to easily optimize the combination of planning strategy and constraint adherence for a particular robot or application.

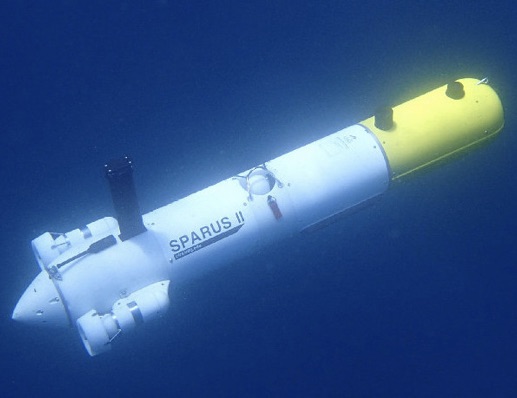

I have also been working with the University of Girona on algorithms that can be deployed on their Sparus II AUV. This robot has complex dynamics constraints, limited computational power, and is deployed in an initially unknown environment. On top of that, there are currents to deal with and very noisy sensor data. We have developed novel, practical online planning algorithms that produce high-quality solutions within a fixed time budget.

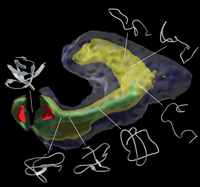

Modeling conformational changes in proteins

Many large protein complexes undergo extensive conformational changes as part of their functionality. Tracing these changes is important for understanding the way these proteins function. We have developed a novel computational methodology to efficiently model the conformational changes in biological macromolecules. The methodology borrows from robot motion planning techniques. A key component of the approach is the parametrization of the degrees of freedom in such a way that energetically favorable conformational changes are favored by the motion planning-inspired conformational sampling algorithm.

Functional annotation of proteins

Broad and extensive knowledge of the biological function of proteins would have immense practical impact on the identification of novel drug targets, the reduction of potential side effects, and on finding the molecular causes of disease. Unfortunately, the experimental determination of protein function is an expensive and time-consuming process. In an effort to accelerate and guide the experimental process, computational techniques have been developed to annotate functional information about well-studied proteins onto predictably similar but less-studied proteins.

We are currently investigating the extent that patterns in the structure and physicochemical properties of small sets of residues can be used to predict function. Specifically, we have developed algorithms for the following problems:

The prediction of protein function. Given a small spatial arrangement of residues characteristic of some function (a motif), we are able to quickly identify with high sensitivity and specificity all proteins in the entire Protein Data Bank with that function.

The identification of patterns of variation within the structures available for a protein family. These patterns are often correlated with phylogeny or binding mode. Such patterns can be exploited to further improve the predictions in the previous problem.

The prediction of inhibitor affinity for the entire human kinome. Kinases are all very similar to each other and are involved in many different biological processes. Being able to predict affinity of putative inhibitors is useful in computational drug design. Here, we do not assume a motif is known. Instead, we exhaustively explore all combinations of binding site residues whose structure and physicochemical properties are predictive of binding some inhibitor. There is not just one motif; the specificity-determining residues are inhibitor dependent.

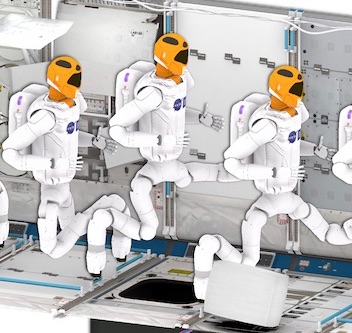

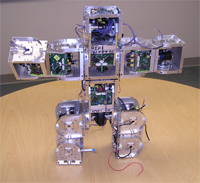

Self-reconfigurable robots

Over the years many different robots have been developed for specific tasks. Self-reconfigurable robots are the only truly general purpose robots. For a given task, they can re-arrange themselves to perform that task. For instance, a number of modules can form an arm to help astronauts perform tasks during space walks, they can be reconfigured into planetary rover, form a network of communication beacons, and collect samples. At the Polymorphic Robotics Laboratory I worked on a system of self-reconfigurable robots called SuperBot. I was in charge of the software development, both on the modules and the simulator code.

Protein folding analysis

The definition of reaction coordinates for the characterization of a protein folding reaction has long been a controversial issue, even for the “simple” case where one single free energy barrier separates the folded and unfolded ensemble. Together with Lydia Kavraki’s group and Cecilia Clementi’s group we have developed a general approach to this problem to obtain a few collective coordinates by using nonlinear dimensionality reduction. We validated the usefulness of this method by characterizing the folding landscape associated with a coarse-grained protein model of src homology 3 as sampled by molecular dynamics simulations. The folding free-energy landscape projected on the few relevant coordinates emerging from the dimensionality reduction can correctly identify the transition-state ensemble of the reaction. The first embedding dimension efficiently captures the evolution of the folding process along the main folding route. These results clearly show that the proposed method can efficiently find a low-dimensional representation of a complex process such as protein folding.

Although the work so far has focused on protein folding, the isomap-based method we have developed is applicable to other domains with large high-dimensional data sets. Especially if the data is non-uniformly sampled, our approach can offer a significant speed-up over plain landmark isomap.

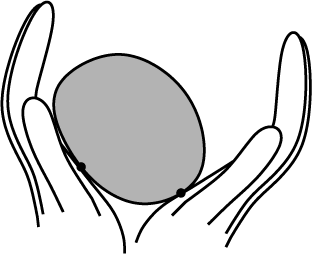

Tactile sensing

Michael Erdmann and I have developed a method to reconstruct the shape of an unknown object using tactile sensors without requiring object immobilization. Instead, the robot manipulates the object without prehension. The robot infers the shape, motion and center of mass of the object based on the motion of the contact points as measured by tactile sensors. This allows for a natural, continuous interaction between manipulation and sensing. Eventually, it will make robots more capable in the physical world by enabling them to pick up unknown objects.

We have analyzed several different cases of the tactile shape reconstruction problem. First, we considered planar shapes with quasistatic dynamics. Simulations and experiments have validated the analytic results. Next, we extended the analysis to the full dynamics and prove observability of the nonlinear system describing the shape and motion of the object being manipulated. In our simulations, a simple observer based on Newton’s method for root finding can recover unknown shapes with almost negligible errors. Using the same framework we can also describe the shape and dynamics of three-dimensional objects. However, there are some fundamental differences between the planar and three-dimensional case, due to increased tangent dimensionality. Also, perfect global shape reconstruction is impossible in the 3D case, but it is almost trivial to obtain upper and lower bounds on the shape. The 3D shape reconstruction method has also been implemented and we present some simulation results.

Parts orienting

In parts orienting the goal is to bring a known part in an unknown orientation to a known orientation. Often this is done without any sensing. I have worked on this problem at the macro-level and the micro-level. At the macro-level, I have developed strategies to orient three-dimensional parts with minimal sensing and manipulation. That is, we would like to bring a part from an unknown position and orientation to a known orientation (but possibly unknown position) with minimal means. In general, it is not possible to orient a part completely without sensors, but it is sufficient if a particular orienting strategy can bring a part into one particular orientation with high probability. The sensing is then reduced to a binary decision: a sensor only has to detect whether the part is in the right orientation or not. If not, the part is fed back to the parts orienting device. Assuming the orienting strategy succeeds with high probability, on average it takes just a few tries to orient the part. An alternative view of this type of manipulation is to consider it as manipulation of the pose distribution. The goal then is to find the pose distribution with minimal entropy, thereby maximally reducing uncertainty.

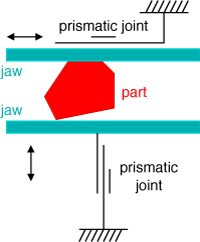

I worked with Ken Goldberg at UC Berkeley on orienting parts at the micrometer scale. As microelectromechanical systems (MEMS) become ubiquitous, the need to orient micro-scale parts becomes more important. At this scale sticking effects complicate hand-off motions and the degrees of freedom of micro-manipulators are limited. We found that polygonal parts can be oriented with a parallel jaw gripper using two simple primitives: squeezing and rolling the part between the jaws. The gripper does not need to be reoriented and no sensing is required. I developed an algorithm that finds a plan of length O(n) in O(n) time for orienting a polygonal micro-scale part with n vertices. To the best of my knowledge this is the first algorithmic approach to micromanipulation.